
msoedov/langcorn

⛓️ Serving LangChain LLM apps and agents automagically with FastApi. LLMops

Topics: api, fastapi, langchain, langchain-python, large-language-models, llm, llmops, openai-api, rest-api, vercel, vercel-serverless-functions

⭐ 939 Stars | 🔱 71 Forks | 👁 7 Watchers | 📋 21 Issues

Python | MIT | Created 2023/4/14 | Updated 2 weeks ago

README

Translated and organized by Gemini

Langcorn

LangCorn is an API server that lets you serve LangChain models and pipelines with ease, leveraging the power of FastAPI for a robust and efficient experience.

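Features

- Easy deployment of LangChain models and pipelines

- Ready-to-use authentication functionality

- High-performance FastAPI framework for serving requests

- Scalable and robust solution for language processing applications

- Support for custom pipelines and processing logic

- Well-documented RESTful API endpoints

- Asynchronous processing for faster response times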

📦 Installation

To get started with LangCorn, simply install the package using pip:

```bash
pip install langcorn
```

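⛓️ Quick Start

Example LLM chain, ex1.py:

```python
import os

from langchain import LLMMathChain, OpenAI

# Use OPENAI_API_KEY from the environment if set; otherwise fall back to a placeholder.
os.environ["OPENAI_API_KEY"] = os.environ.get("OPENAI_API_KEY", "sk-********")

llm = OpenAI(temperature=0)  # temperature=0 keeps the output as deterministic as possible

# `chain` is the object referenced as examples.ex1:chain in the commands below.
chain = LLMMathChain(llm=llm, verbose=True)
```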

Run your LangCorn FastAPI server:

```bash
langcorn server examples.ex1:chain

[INFO] 2023-04-18 14:34:56.32 | api:create_service:75 | Creating service
[INFO] 2023-04-18 14:34:57.51 | api:create_service:85 | lang_app='examples.ex1:chain':LLMChain(['product'])
[INFO] 2023-04-18 14:34:57.51 | api:create_service:104 | Serving
[INFO] 2023-04-18 14:34:57.51 | api:create_service:106 | Endpoint: /docs
[INFO] 2023-04-18 14:34:57.51 | api:create_service:106 | Endpoint: /examples.ex1/run
INFO: Started server process [27843]
INFO: Waiting for application startup.
INFO: Application startup complete.
INFO: Uvicorn running on http://127.0.0.1:8718 (Press CTRL+C to quit)
```
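Once the server is running, each chain is reachable by POSTing JSON to its `/run` endpoint. The following minimal sketch uses only the Python standard library to build such a request. The `build_run_request` helper is hypothetical, and the `product` input key is an assumption taken from the `LLMChain(['product'])` log line above; check `/docs` for the actual request schema of each endpoint.

```python
import json
import urllib.request


def build_run_request(base_url: str, endpoint: str, payload: dict) -> urllib.request.Request:
    """Build (but do not send) a JSON POST request to a LangCorn run endpoint.

    Hypothetical helper for illustration; base URL and payload keys are assumptions.
    """
    body = json.dumps(payload).encode("utf-8")
    return urllib.request.Request(
        url=base_url.rstrip("/") + endpoint,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# "product" is assumed from the log line; the real input keys are listed at /docs.
req = build_run_request("http://127.0.0.1:8718", "/examples.ex1/run", {"product": "colorful socks"})
print(req.get_method(), req.full_url)

# To actually call the running server:
# with urllib.request.urlopen(req) as resp:
#     print(json.loads(resp.read()))
```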

Or, alternatively:

```bash
python -m langcorn server examples.ex1:chain
```

Run multiple chains:

```bash
python -m langcorn server examples.ex1:chain examples.ex2:chain

[INFO] 2023-04-18 14:35:21.11 | api:create_service:75 | Creating service
[INFO] 2023-04-18 14:35:21.82 | api:create_service:85 | lang_app='examples.ex1:chain':LLMChain(['product'])
[INFO] 2023-04-18 14:35:21.82 | api:create_service:85 | lang_app='examples.ex2:chain':SimpleSequentialChain(['input'])
[INFO] 2023-04-18 14:35:21.82 | api:create_service:104 | Serving
[INFO] 2023-04-18 14:35:21.82 | api:create_service:106 | Endpoint: /docs
[INFO] 2023-04-18 14:35:21.82 | api:create_service:106 | Endpoint: /examples.ex1/run
[INFO] 2023-04-18 14:35:21.82 | api:create_service:106 | Endpoint: /examples.ex2/run
INFO: Started server process [27863]
INFO: Waiting for application startup.
INFO: Application startup complete.
INFO: Uvicorn running on http://127.0.0.1:8718 (Press CTRL+C to quit)
```
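Judging only from the log output above, each `module:object` argument becomes an endpoint named after its module path, e.g. `examples.ex2:chain` is served at `/examples.ex2/run`. The helper below is a hypothetical sketch of that inferred mapping, handy when scripting clients; it is not LangCorn's own routing code.

```python
def endpoint_for(spec: str) -> str:
    """Map a "module.path:object" spec to its run endpoint.

    The rule is inferred from LangCorn's log output only; it is not the library's code.
    """
    module, sep, attr = spec.partition(":")
    if not sep or not module or not attr:
        raise ValueError(f"expected 'module:object', got {spec!r}")
    return f"/{module}/run"


for spec in ["examples.ex1:chain", "examples.ex2:chain"]:
    print(spec, "->", endpoint_for(spec))
```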

Import the necessary packages and create your FastAPI app:

```python
from fastapi import FastAPI

from langcorn import create_service

app: FastAPI = create_service("examples.ex1:chain")
```
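Strings such as `"examples.ex1:chain"` follow the common `module:attribute` import-string convention, the same one uvicorn uses for `main:app`. A generic sketch of how such a string can be resolved with `importlib` follows; `resolve_spec` is a hypothetical name, and this is not LangCorn's implementation.

```python
import importlib


def resolve_spec(spec: str):
    """Resolve a "package.module:attribute" string to the object it names.

    Generic sketch of the import-string convention; not LangCorn's implementation.
    """
    module_path, sep, attr_path = spec.partition(":")
    if not sep or not module_path or not attr_path:
        raise ValueError(f"expected 'module:attribute', got {spec!r}")
    obj = importlib.import_module(module_path)
    # Walk dotted attribute paths such as "module:Class.attr".
    for name in attr_path.split("."):
        obj = getattr(obj, name)
    return obj


# Demonstrated with a standard-library object so it runs without LangCorn installed.
dumps = resolve_spec("json:dumps")
print(dumps({"ok": True}))
```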

Multiple chains:

```python
from fastapi import FastAPI

from langcorn import create_service

app: FastAPI = create_service("examples.ex2:chain", "examples.ex1:chain")
```

or:

```python
from fastapi import FastAPI

from langcorn import create_service

app: FastAPI = create_service(
    "examples.ex1:chain",
    "examples.ex2:chain",
    "examples.ex3:chain",
    "examples.ex4:sequential_chain",
    "examples.ex5:conversation",
    "examples.ex6:conversation_with_summary",
    "examples.ex7_agent:agent",
)
```

Run your LangCorn FastAPI server with uvicorn (`main:app` refers to the `app` object in `main.py`):

```bash
uvicorn main:app --host 0.0.0.0 --port 8000
```

Your LangChain models and pipelines are now accessible via the LangCorn API server.

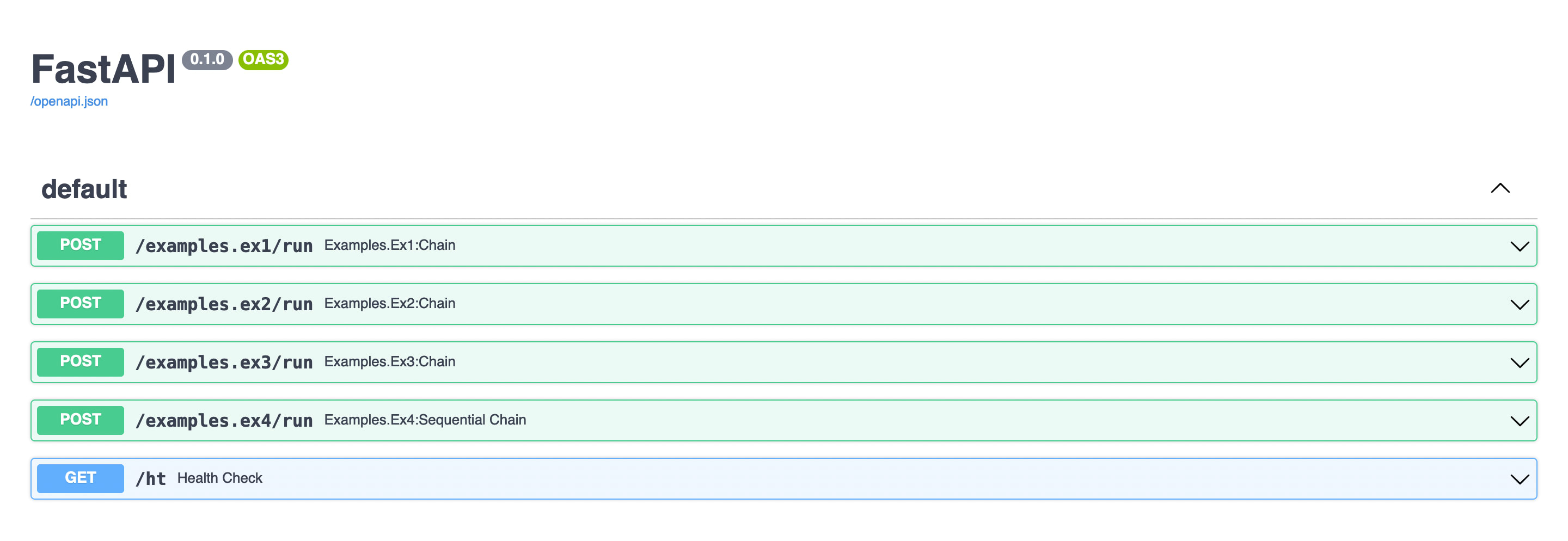

Docs

Automatically generated FastAPI documentation. A live example is deployed on Vercel.

Auth

You can add static API token authentication by specifying `auth_token`:

```bash
python -m langcorn server examples.ex1:chain examples.ex2:chain --auth_token=api-secret-value
```

or:

```python
from fastapi import FastAPI

from langcorn import create_service

app: FastAPI = create_service("examples.ex1:chain", auth_token="api-secret-value")
```
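A client then has to present the token on each request. The sketch below assumes the token is sent as an HTTP Bearer token in the `Authorization` header; that scheme is an assumption not confirmed by this README, so verify it against your LangCorn version. `authed_headers` is a hypothetical helper, and the empty JSON body is a placeholder.

```python
import urllib.request

AUTH_TOKEN = "api-secret-value"  # same placeholder value as in the examples above


def authed_headers(token: str) -> dict:
    """Return request headers carrying the static API token.

    ASSUMPTION: LangCorn expects "Authorization: Bearer <token>"; check your version.
    """
    return {
        "Authorization": f"Bearer {token}",
        "Content-Type": "application/json",
    }


req = urllib.request.Request(
    "http://127.0.0.1:8718/examples.ex1/run",
    data=b"{}",  # placeholder body; real input keys depend on the chain
    headers=authed_headers(AUTH_TOKEN),
    method="POST",
)
print(req.get_header("Authorization"))
```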

Custom API keys

An LLM API key can be supplied per request via the `X-LLM-API-KEY` header:

```http
POST http://0.0.0.0:3000/examples.ex6/run
X-LLM-API-KEY: sk-******
Content-Type: application/json
```
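The same request can be built from Python. This standard-library sketch sets the `X-LLM-API-KEY` header shown in the HTTP example; the helper name `build_request_with_llm_key` and the minimal `{"input": ...}` body are assumptions for illustration.

```python
import json
import urllib.request


def build_request_with_llm_key(url: str, llm_api_key: str, payload: dict) -> urllib.request.Request:
    """Build a JSON POST request that carries a per-request LLM API key (hypothetical helper)."""
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "X-LLM-API-KEY": llm_api_key,
            "Content-Type": "application/json",
        },
        method="POST",
    )


req = build_request_with_llm_key(
    "http://0.0.0.0:3000/examples.ex6/run",
    "sk-******",  # placeholder key, as in the HTTP example
    {"input": "What is brain?"},  # assumed minimal body for the ex6 conversation chain
)
# urllib normalizes header names with str.capitalize(), hence the lookup key below.
print(req.get_header("X-llm-api-key"))
```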

Handling memory

```json
{
  "history": "string",
  "input": "What is brain?",
  "memory": [
    {
      "type": "human",
      "data": {
        "content": "What is memory?",
        "additional_kwargs": {}
      }
    },
    {
      "type": "ai",
      "data": {
        "content": " Memory is the ability of the brain to store, retain, and recall information. It is the capacity to remember past experiences, facts, and events. It is also the ability to learn and remember new information.",
        "additional_kwargs": {}
      }
    }
  ]
}
```
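The `memory` list in this request is a sequence of `{"type", "data"}` messages. The hypothetical helpers below build such a body from `(role, text)` pairs; they only reproduce the shape of this example request. Defaulting `history` to an empty string is an assumption (the example's `"string"` appears to be the Swagger UI placeholder).

```python
import json


def to_memory(turns):
    """Convert (role, text) pairs into the "memory" message list shown in the example.

    role must be "human" or "ai", matching the "type" values in the example request.
    """
    allowed = {"human", "ai"}
    memory = []
    for role, text in turns:
        if role not in allowed:
            raise ValueError(f"unsupported role: {role!r}")
        memory.append({"type": role, "data": {"content": text, "additional_kwargs": {}}})
    return memory


def build_memory_request(user_input, turns, history=""):
    """Assemble a body with the example's fields: history, input, memory (hypothetical helper)."""
    return {"history": history, "input": user_input, "memory": to_memory(turns)}


body = build_memory_request(
    "What is brain?",
    [
        ("human", "What is memory?"),
        ("ai", "Memory is the ability of the brain to store, retain, and recall information."),
    ],
)
print(json.dumps(body, indent=2))
```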

Response:

{

"output": " The brain is an organ in the human body that is responsible for controlling thought, memory, emotion, and behavior. It is composed of billions of neurons that communicate with